Most paid media teams would say they test creative. Very few actually have a system for it.

There’s a difference between running two ads and seeing which one gets more clicks, and building a structured process that consistently surfaces winning creative, scales it intelligently, and knows when to move on. The first is guesswork with extra steps. The second is how brands compound their paid media performance over time.

Here’s what ad creative testing actually is, where most teams go wrong, and how to build a process that works.

What Is Ad Creative Testing?

Ad creative testing is the process of systematically comparing different versions of your ads, specifically the visuals, copy, hooks, format, and calls to action, to identify which elements drive the best performance for your goals.

It’s different from what a lot of teams call A/B testing. True A/B testing isolates a single variable between two otherwise identical ads. Most in-platform ‘creative testing’ is looser than that: multiple variables change at once, samples are too small, and tests end before they’ve collected enough data to mean anything.

There are two modes of creative testing worth distinguishing. Pre-launch concept testing evaluates whether a creative direction is worth pursuing before you spend money producing it. Live performance testing runs actual ads in the platform and measures what happens in the real world with real users.

The best teams use both. Pre-launch testing saves production budget. Live testing gives you ground truth.

Why Most Teams Get It Wrong

The most common mistake isn’t failing to test; most teams test something. It’s testing too many variables at once.

When you change the headline, the image, and the CTA at the same time, and one version wins, you don’t know which change made the difference. The result is a win you can’t explain and can’t repeat. The one-variable rule exists for exactly this reason: isolate one element per test, so you know what you actually learned.
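One way to enforce the one-variable rule is to check it before a test launches. The sketch below assumes each ad is described as a simple dictionary of creative elements; the field names (`headline`, `image`, `cta`) and the helper names are hypothetical, not part of any ad platform's API.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """Return the names of the creative elements that differ between two ads."""
    keys = sorted(set(control) | set(variant))
    return [k for k in keys if control.get(k) != variant.get(k)]


def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True only when exactly one creative element differs."""
    return len(changed_fields(control, variant)) == 1


# Illustrative ads; the values are made up for the example.
control = {"headline": "Save 20% today", "image": "lifestyle.png", "cta": "Shop now"}
clean_variant = {**control, "headline": "Free shipping on every order"}
muddled_variant = {**control, "headline": "Free shipping on every order", "cta": "Learn more"}

print(changed_fields(control, clean_variant))    # ['headline']
print(changed_fields(control, muddled_variant))  # ['cta', 'headline']
```

If the second list has more than one entry, a win for that variant can't be attributed to any single change.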

The second most common mistake is ending tests too early. There’s a human instinct to call a winner the moment one version pulls ahead, but ad performance early in a test is noisy. Without enough data, you’re just reading randomness.

As a rule of thumb, I look for a creative to have received spend equivalent to roughly three to five times the average cost per conversion before calling it. If your CPA is $20, you want at least $60 to $100 spent before drawing conclusions. At the high end, once a creative has received around $500 in spend, you have a reasonably solid read.

The third mistake is at the opposite end of the volume spectrum: testing too much. I see this just as often now as undertesting. Teams decide they need to pump out 50 or 100 creative variants a month, prioritize volume over quality, and end up with a lot of sloppy work they can’t learn from.

Producing creative that isn’t well-considered means the platform spends a tiny budget on it, you can’t get enough signal to conclude anything, and you’ve wasted the production effort. Volume matters. Quality matters more.

The Creative Testing Hierarchy

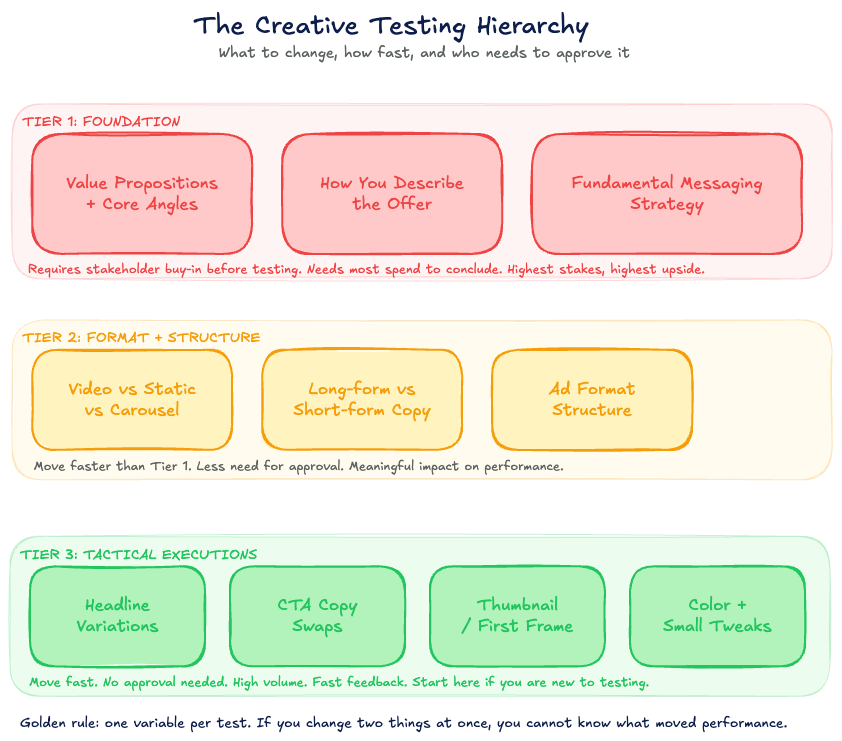

Not all creative changes are equal. Some decisions require more stakeholder alignment and more evidence before you act on them. Others can be made quickly and unilaterally.

Thinking about your creative tests as a hierarchy helps you prioritize and move faster.

At the base of the pyramid are the most foundational elements: value propositions, angles, and core messaging. These are the decisions about how you describe your product or offer. Does this resonate with your audience? Does it align with how the brand wants to be perceived? These tests take longer, require more spend to conclude, and usually need the client or brand lead to sign off before you run them. They’re high-stakes and high-reward.

In the middle of the pyramid are format and structural choices: video versus static, carousel versus single image, short-form versus long-form copy. These are meaningful tests but don’t require the same level of stakeholder buy-in. You can move faster.

At the top of the pyramid are tactical executions: headline variations, CTA copy, color choices, thumbnail frames. These are low-commitment, fast-feedback tests. You don’t need to run them by anyone.

You just run them.

The point of the hierarchy isn’t to slow you down at the top level. It’s to help you think clearly about what kind of decision you’re making and how much evidence you actually need before acting on it.

How to Set Up a Creative Test

Effective creative testing setup comes down to three things: isolating the right variable, giving the test enough budget to generate signal, and knowing what metric you’re actually optimizing for.

On budget per variant: as a rule of thumb, each creative variant should receive at minimum three to five times your average CPA. If your cost per conversion is $100, you need $300 to $500 per creative before you can draw a meaningful conclusion. Going under that threshold is common, and it’s why so many teams have a graveyard of ‘inconclusive’ tests.
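The budget rule of thumb is simple arithmetic, so it's easy to plan for up front. This is a minimal sketch of that calculation; the function names are my own, and the three-to-five multiple is the rule of thumb from this article, not a statistical guarantee.

```python
def min_spend_range(cpa: float, low_multiple: float = 3.0,
                    high_multiple: float = 5.0) -> tuple[float, float]:
    """Spend per creative variant suggested by the 3x-5x CPA rule of thumb."""
    return (cpa * low_multiple, cpa * high_multiple)


def budget_for_test(cpa: float, n_variants: int, multiple: float = 5.0) -> float:
    """Total budget needed so every variant reaches the chosen CPA multiple."""
    return cpa * multiple * n_variants


print(min_spend_range(100))     # (300.0, 500.0)
print(min_spend_range(20))      # (60.0, 100.0)
print(budget_for_test(100, 3))  # 1500.0
```

Running the numbers before launch tells you whether a planned test can conclude at all. Three variants at a $100 CPA need about $1,500 to reach the top of the range.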

On what to measure: the metric you use to call a winner should match the goal of the campaign. For conversion campaigns, use CPA. For lead gen, use cost per qualified lead. CTR alone is a dangerous proxy because high CTR with low conversion is a common pattern for ads that generate curiosity without generating intent.
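To see how CTR and CPA can disagree, here is a small sketch with two variants that received the same spend. The `VariantStats` class and every number in it are invented for illustration; they are not data from a real account.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    name: str
    spend: float
    impressions: int
    clicks: int
    conversions: int

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks divided by impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cpa(self) -> float:
        """Cost per conversion; treated as infinite when nothing converted."""
        return self.spend / self.conversions if self.conversions else float("inf")


# Hypothetical results after equal spend on each variant.
variants = [
    VariantStats("curiosity_hook", spend=500.0, impressions=40_000, clicks=1_200, conversions=4),
    VariantStats("benefit_hook", spend=500.0, impressions=40_000, clicks=600, conversions=10),
]

best_by_ctr = max(variants, key=lambda v: v.ctr)
best_by_cpa = min(variants, key=lambda v: v.cpa)

print(best_by_ctr.name)  # curiosity_hook
print(best_by_cpa.name)  # benefit_hook
```

The curiosity hook gets twice the clicks, but at a $125 CPA against $50 it loses on the metric a conversion campaign actually cares about.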

On runtime: rather than setting a fixed number of days, use spend as your trigger. Let the test run until each variant has received enough budget to generate a meaningful sample. Seven days is a common default, but a slow-spending campaign may not have enough data in seven days.

Follow the spend, not the calendar.
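Using spend as the trigger can be reduced to a single check: has every variant reached the threshold yet? The sketch below assumes you can pull spend per variant from your reporting; the function names and the default multiple of three are assumptions for the example.

```python
def ready_to_call(spend_by_variant: dict[str, float], cpa: float,
                  multiple: float = 3.0) -> bool:
    """True once every variant has spent at least `multiple` times the CPA."""
    threshold = cpa * multiple
    return bool(spend_by_variant) and all(
        spend >= threshold for spend in spend_by_variant.values()
    )


def still_needs_spend(spend_by_variant: dict[str, float], cpa: float,
                      multiple: float = 3.0) -> dict[str, float]:
    """Remaining spend for each variant that is still below the threshold."""
    threshold = cpa * multiple
    return {
        name: threshold - spend
        for name, spend in spend_by_variant.items()
        if spend < threshold
    }


# Hypothetical spend on day seven of a slow-spending campaign.
day_seven = {"hook_a": 310.0, "hook_b": 140.0}

print(ready_to_call(day_seven, cpa=100))      # False
print(still_needs_spend(day_seven, cpa=100))  # {'hook_b': 160.0}
```

Here the calendar says the test is done, but one variant is still $160 short of a readable sample.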

What to Test First

If you’re building a testing program from scratch, the question of what to start with matters. The answer is: the element most likely to have the biggest impact on performance.

In most paid social accounts, that’s the hook. The first three seconds of a video or the first line of copy does the most work. If the hook doesn’t stop the scroll, nothing else about the creative matters. Testing hook variations gives you fast feedback and outsized leverage.

After hooks, in rough order of impact: visual format (static versus video versus carousel), core value proposition angle, and CTA copy. Spend the most energy testing the elements closest to the top of that list.

How to Scale a Winner

Finding a winning creative is only half the process. Scaling it without killing it is the other half.

The most common mistake at this stage is increasing budget too aggressively, too fast. When you spike spend on a winning ad, you often accelerate its fatigue, burn through your retargeting pool, and end up worse off than before you scaled.

A better approach: once a creative has demonstrated strong performance at a moderate budget, increase spend incrementally rather than all at once. Simultaneously, start building the next round of tests around variations of the winning elements. The creative that’s working today will fatigue.

Your job is to be ready with what’s next before that happens.
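As a rough illustration of incremental scaling, the sketch below builds a stepped budget schedule. The 20% step per increase is purely an assumption for the example; this article doesn't prescribe a step size, and the right pace depends on the account and platform.

```python
def budget_ramp(start: float, target: float, step_pct: float = 0.20) -> list[float]:
    """Daily budgets from `start` up to `target`, growing by `step_pct` per step.

    The step size is a hypothetical default, not a platform rule.
    """
    if start <= 0 or step_pct <= 0:
        raise ValueError("start and step_pct must be positive")
    current = float(start)
    target = float(target)
    schedule = [round(current, 2)]
    while current < target:
        # Never overshoot the target on the final step.
        current = min(current * (1 + step_pct), target)
        schedule.append(round(current, 2))
    return schedule


print(budget_ramp(100, 200))  # [100.0, 120.0, 144.0, 172.8, 200.0]
```

Doubling the budget takes four steps here instead of one, which leaves room to watch for fatigue between increases.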

Creative Testing for High-Consideration Products

Everything above applies to most paid social programs. But there’s an important adaptation for brands in high-consideration verticals, whether that’s education, software, home goods, or anything where someone doesn’t typically buy the first time they see an ad.

With high-consideration products, your cost per conversion is higher, which means you get fewer data points per dollar spent and your testing cycle is slower. The way to counteract this is to test against a lower-commitment offer first.

A free guide, a webinar, a program info download, anything that captures a lead without asking for full commitment gives you a faster feedback loop. You can use that faster feedback to develop conviction about which creative angles work, then apply those to your main conversion campaigns. Lead capture becomes a creative validation tool as much as a pipeline tool.

The Role of AI in Creative Testing

AI tools are changing how teams approach creative testing in two meaningful ways.

First, AI can help generate more variants faster. Tools that analyze your top-performing ads and produce copy variations or concept suggestions compress the ideation phase significantly. The output isn’t always great, but the speed-to-draft benefit is real.

Second, platforms like Meta’s Advantage+ are taking over more of the delivery optimization layer. This is generally good for performance, but it changes what ‘creative testing’ means in practice. When Meta is auto-optimizing delivery across your creative pool, individual A/B tests become harder to run cleanly. You need to understand how the platform’s own testing infrastructure works and adapt your methodology to it.

What AI and platform automation don’t replace is creative judgment. Knowing which angles are worth testing, which hooks are genuinely differentiated, and which visual directions fit the brand takes human expertise. The tools are faster.

The strategy is still yours.

Building a Testing Cadence

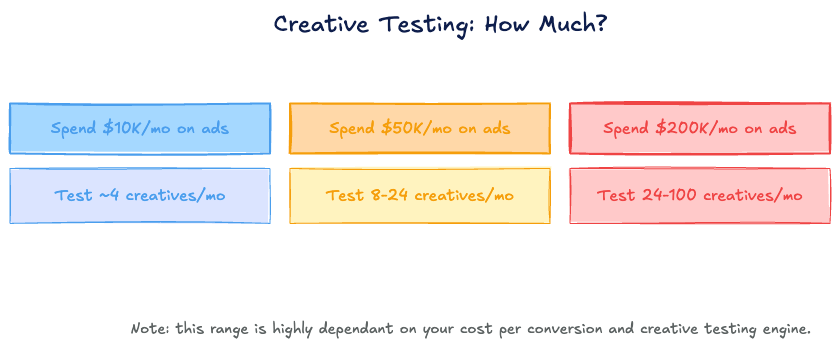

How many creatives should you be testing per month? The honest answer is that it scales with your ad spend and inversely with your cost per conversion.

For brands spending around $10,000 per month on paid social, testing 4 creatives per month is a reasonable starting point. At $50,000 per month, 8 to 24 is a workable range. At $200,000 per month, the range opens up to 24 to 100, though where you land depends heavily on your CPA and production capacity.

Keep in mind: these aren’t all brand new concepts. A mix of new angles (entirely new creative directions) and new variants (iterations on existing concepts, different hooks, different copy treatments) is the norm. Not every test has to be a new idea. Some of your best tests will be systematic variations on what’s already working.
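The relationship between spend, CPA, and test volume can be sketched as a capacity estimate. Two inputs here are assumptions of mine rather than figures from this article: the share of monthly spend set aside for testing (20% in the example) and the choice to fund each test at five times CPA.

```python
import math


def monthly_test_capacity(monthly_spend: float, cpa: float,
                          test_share_pct: int = 20, multiple: float = 5.0) -> int:
    """Estimate how many creatives a month's testing budget can fund.

    test_share_pct: hypothetical percentage of spend reserved for testing.
    multiple: spend per creative, expressed as a multiple of CPA.
    """
    if cpa <= 0 or multiple <= 0:
        raise ValueError("cpa and multiple must be positive")
    test_budget = monthly_spend * test_share_pct / 100
    return math.floor(test_budget / (cpa * multiple))


print(monthly_test_capacity(10_000, cpa=100))   # 4
print(monthly_test_capacity(50_000, cpa=100))   # 20
print(monthly_test_capacity(200_000, cpa=100))  # 80
print(monthly_test_capacity(50_000, cpa=400))   # 5
```

The last line shows the inverse relationship with CPA: at the same $50,000 in spend, a $400 CPA funds a quarter as many conclusive tests as a $100 CPA.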

Frequently Asked Questions

What is ad creative testing?

Ad creative testing is the process of systematically comparing different versions of ads to identify which creative elements drive the best performance. It covers visuals, copy, hooks, format, and calls to action, and uses live platform data to determine winners rather than relying on opinions or gut instinct.

How do you test ad creative?

Set up campaigns with one variable changed between versions, give each variant enough budget to generate statistical signal (typically three to five times your average cost per conversion), and measure performance against the goal of the campaign. Use spend as your test duration trigger, not a fixed number of days.

What is A/B testing for ad creatives?

A/B testing for ad creatives means running two versions of an ad simultaneously with only one element different between them: the image, the headline, the CTA, or another single variable. The goal is to isolate what actually drove the performance difference. Testing multiple changes at once produces inconclusive results.

How does ad testing influence creative development?

Over time, a structured testing program builds a body of evidence about what resonates with your audience. Winning hooks, formats, and value proposition angles inform the next round of creative development. The testing program becomes a feedback loop that continuously improves creative quality rather than relying on intuition.

What should you ask when testing ad creative?

The key questions: What single variable am I testing? Do I have enough budget per variant to generate real signal? What is the metric I’m using to call a winner? Is the performance difference large enough to be meaningful, or is it within normal variance?
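For the last question, one common way to judge whether a gap is within normal variance is a two-proportion z-test on conversion rates. This is a sketch using only the standard library, not a method this article prescribes; it relies on a normal approximation that gets unreliable with very small conversion counts, and the example numbers are invented.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates.

    conv_*: conversions per variant; n_*: clicks (or sessions) per variant.
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # identical all-or-nothing rates: no detectable difference
    z = (rate_a - rate_b) / std_err
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical results: conversions out of 1,000 clicks per variant.
small_gap = two_proportion_p_value(30, 1_000, 45, 1_000)
large_gap = two_proportion_p_value(30, 1_000, 60, 1_000)

print(f"30 vs 45 conversions: p = {small_gap:.3f}")  # above 0.05, could be noise
print(f"30 vs 60 conversions: p = {large_gap:.3f}")  # below 0.01, unlikely to be noise
```

A 50% lift in conversions looks decisive on a dashboard, yet at this sample size it doesn't clear the conventional 0.05 threshold. That is the noise the early-stopping warning is about.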