Key Takeaways

- Meta’s Advantage+ has made audience testing functionally obsolete. Creative is now the primary testing lever in paid social.

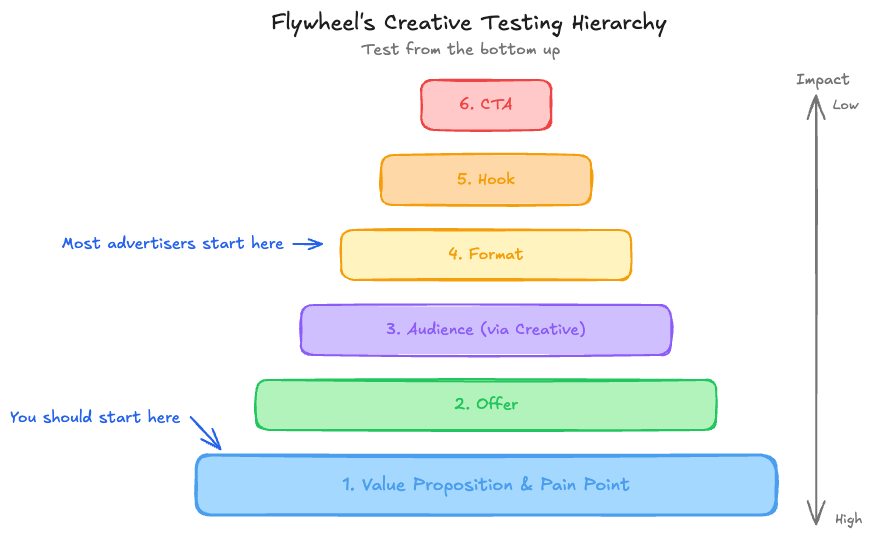

- A testing hierarchy should start with value proposition and pain point at the base, then work up through offer, audience, format, hook, and CTA.

- Allocate 15-50% of your budget to testing, depending on your cost per conversion and how much proven creative you already have.

- Most advertisers kill winning creative too fast because they’re tired of seeing the ad, while their actual audience has barely seen it.

Why Creative Is Now the Primary Testing Lever

A few years ago, paid social testing budgets were split across multiple levers. You’d test different audiences, different lookalike seeds, broad versus narrow targeting, and different creatives across those audiences. The testing budget had to stretch in a lot of directions.

That world is largely gone.

Meta’s Advantage+ campaigns, and the company’s broader product direction, have systematically removed manual audience controls. Meta is discouraging audience testing, and frankly, the results back it up. For most advertisers, a simple broad audience or a basic lookalike, at least at the prospecting level, outperforms elaborate audience segmentation.

What this means practically is that audience as a testing variable is functionally extinct for most accounts. The testing budget that used to be split across audiences and creative can now be focused entirely on creative.

But Meta hasn’t just shifted the importance to creative. They’ve changed how creative works mechanically. Meta’s algorithm now reads your creative visually and contextually.

It’s not just treating your ad as an image or video file. It understands whether the ad shows a person holding a water bottle, a student studying, a child versus an adult. When someone converts on a specific creative, that sends a signal to Meta: this type of person responds to this type of creative, so find more people who would respond similarly.

Every creative you test is simultaneously a creative test and an audience discovery tool. That’s a fundamental shift from the old model, where audience and creative were independent variables.

Creative Decision Framework

At Flywheel Digital, we use a decision-making framework called hats, haircuts, and tattoos. A hat is a decision that’s easily reversible, low-risk, and cheap to experiment with. A haircut takes more strategic foresight and you can’t do it every week. A tattoo is a decision that, once made, is very difficult to undo.

This framework applies directly to creative testing, and it’s one of the most useful lenses for deciding what to test and how aggressively.

Hats: Most Creative Tests

The overwhelming majority of creative variants should be treated as hats. You’re trying on a new look. If it works, great, keep wearing it. If it doesn’t, take it off and try another. New image styles, different headline approaches, alternative video hooks: these are all hats. As the cost of creative production drops (thanks in large part to AI-assisted workflows), the threshold for “worth testing” keeps getting lower.

The simple rule: if you wouldn’t be embarrassed to run it and it doesn’t contradict your brand, try it on.

Haircuts: Promotions and Offers

Promotional creative (think sales, scholarships, first-purchase discounts, and limited-time offers) falls into the haircut category. These can sharpen your performance significantly when deployed at the right time. But you can’t run promotions constantly without training your audience to wait for the next deal.

Haircuts need spacing. Your hair needs time to grow back. If you run a 20% off campaign every two weeks, you’re not running promotions anymore. You’re just cheaper. And once your audience is conditioned to wait for the discount, you’ve burned through your intent and your brand equity in one move.

Tattoos: Brand-Level Messaging

Anything that fundamentally repositions how your audience perceives your brand is a tattoo. Running a batch of ads that aggressively push “cheapest option on the market” when your brand positioning is premium quality? That’s a tattoo. You can technically remove it, but the impression lingers.

Before testing any creative concept, ask: would running a hundred impressions of this ad damage our brand positioning if the performance doesn’t work out? If yes, reconsider. If no, it’s probably a hat.

The Creative Testing Hierarchy

Most advertisers structure their creative tests from the wrong end. They start by testing formats (images versus video), hooks (first three seconds), and calls to action. These are the most visible, surface-level elements, which is exactly why people default to testing them first.

At Flywheel, we use a testing hierarchy that works from the base up, prioritizing the variables that have the biggest impact on ad performance before refining the details.

Level 1: Value Proposition and Pain Point. At the base of the pyramid is the fundamental marketing question: what problem are you solving, and why should anyone care? If you haven’t validated that the core value proposition resonates, testing hooks and CTAs is premature. A surprising number of accounts spending $50K+ per month on Meta have never explicitly tested whether their audience responds better to “save time on meal planning” versus “eat healthier without the effort” versus “stop wasting food every week.” Those are three different value propositions, and one of them will significantly outperform the others.

Level 2: Offer. The offer is what someone actually gets from engaging with the ad. Are they learning about a course? Getting a free trial? Receiving a discount? The offer sits one level above value proposition because the same value proposition can be expressed through different offers.

Level 3: Audience (via Creative). In the new world of Advantage+, “audience testing” through creative means speaking to specific audiences directly in the ad itself. “Hey, gardeners, this shoe is perfect for you.” “Training for a triathlon? This is what you need.” You’re not changing targeting settings. You’re changing who the creative speaks to, and letting Meta’s algorithm find those people.

Level 4: Format. Images versus video, carousel versus single image, UGC-style versus polished production. Format testing lives here, not at the base of the pyramid, because format matters less than what you’re saying and to whom.

Level 5: Hook. The first three seconds of a video or the headline of an image ad. By this point, you’ve already validated the value proposition, offer, audience, and format. Now you’re optimizing the entry point.

Level 6: Call to Action. The CTA (“Learn more,” “Shop now,” “Get started”) is the smallest lever, at the top of the pyramid. It matters, but test everything below it first.

Most advertisers invert this and start with format, hook, and CTA at the bottom of their pyramid. That’s why they spend months testing without finding meaningful performance gains. They’re optimizing the least impactful variables first.
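One way to make the ordering concrete is to treat the pyramid as an ordered list and always pick the lowest level that hasn't been validated yet. The sketch below is only an illustration: the level names come from the hierarchy above, while `next_level_to_test` and the `validated` set are hypothetical helpers, not part of any ad platform.

```python
# Levels in the order the hierarchy recommends testing them (base of the pyramid first).
TESTING_HIERARCHY = [
    "value proposition and pain point",
    "offer",
    "audience (via creative)",
    "format",
    "hook",
    "call to action",
]


def next_level_to_test(validated):
    """Return the lowest pyramid level that has not been validated yet.

    `validated` is a hypothetical set of level names the team considers settled.
    Returns None when every level has been validated.
    """
    for level in TESTING_HIERARCHY:
        if level not in validated:
            return level
    return None


# An account that has only ever tested formats and hooks still starts at the base.
print(next_level_to_test({"format", "hook"}))
```

The point of the loop is that work done higher up the pyramid doesn't let you skip the base: until the value proposition is validated, it remains the next test.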

How to Size Your Testing Budget

When building a creative testing program, start with three variables: how much proven creative you already have, your total monthly budget, and your cost per conversion.

Proven Creative Ratio. If you’re a brand-new advertiser or entering a new platform, everything is a test. Your testing allocation is effectively 100% until you find what works. If you’ve been running ads for a while and have a stable of proven performers, your testing budget should typically fall between 15% and 50% of total spend.

The exact number depends on your profitability targets and appetite for risk. More aggressive brands push closer to 50%. Conservative brands with tight margin requirements stay closer to 15-20%.

Cost Per Conversion Drives Creative Volume. This is the variable most advertisers overlook. If your cost per conversion is $200 (common in education or B2B), you need more testing budget to generate enough data points to draw conclusions. A $5,000 test budget only gives you 25 conversions’ worth of data.

If your cost per conversion is $20 (common in e-commerce), that same $5,000 generates 250 data points. You can make decisions faster with less budget.

Work backward from your cost per conversion to determine how many creatives you can meaningfully test in a given month. It might be 4. It might be 30. Both are valid at $50K/month depending on the economics.

To see how Flywheel builds paid social programs for consumer brands, check out our approach to scaling ad creative alongside performance.

When to Retire a Winning Ad

Deciding when to retire a high-performing ad is one of the hardest judgment calls in paid social, and one of the decisions we have the most internal conversations about at Flywheel.

The data signals to watch for are rising cost per conversion over time, declining click-through rates, and increasing frequency metrics. When a creative that used to convert at $30 is now consistently converting at $50, and newer creatives in your testing campaign are outperforming it, that’s a clear signal.
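As a minimal sketch, those signals can be checked by comparing two reporting windows for the same creative. Everything here is an assumption for illustration: the `CreativeSnapshot` schema, the function names, and the sample CTR and frequency values are hypothetical, and a real check would look at a trend across several windows rather than two points.

```python
from dataclasses import dataclass


@dataclass
class CreativeSnapshot:
    """Metrics for one creative over one reporting window (hypothetical schema)."""
    cost_per_conversion: float
    click_through_rate: float
    frequency: float


def fatigue_signals(earlier, recent):
    """List which of the three fatigue signals moved the wrong way between windows."""
    signals = []
    if recent.cost_per_conversion > earlier.cost_per_conversion:
        signals.append("cost per conversion rising")
    if recent.click_through_rate < earlier.click_through_rate:
        signals.append("click-through rate declining")
    if recent.frequency > earlier.frequency:
        signals.append("frequency increasing")
    return signals


def is_retirement_candidate(earlier, recent, best_test_cpa):
    """Flag a winner only when its own cost has risen AND a newer creative beats it."""
    cost_rising = recent.cost_per_conversion > earlier.cost_per_conversion
    return cost_rising and best_test_cpa < recent.cost_per_conversion


# The $30 -> $50 example from the text; CTR and frequency values are made up.
earlier = CreativeSnapshot(cost_per_conversion=30.0, click_through_rate=0.012, frequency=1.8)
recent = CreativeSnapshot(cost_per_conversion=50.0, click_through_rate=0.009, frequency=3.1)
print(fatigue_signals(earlier, recent))
print(is_retirement_candidate(earlier, recent, best_test_cpa=38.0))
```

Requiring both conditions in `is_retirement_candidate` mirrors the text: rising cost alone is a warning, while rising cost plus a better-performing challenger is the clear signal.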

The ideal way to retire a winner is gradually. As you ship new test winners into your evergreen campaign, the original winner naturally fades in relative performance and spend. Meta’s algorithm starts allocating more budget to the newer, higher-performing creatives. The old winner dies of natural causes rather than being pulled off life support.

But often that transition doesn’t happen cleanly. Meta likes showing ads with a proven track record, so the legacy creative keeps getting spend even when newer options might perform better.

Here’s the critical bias to watch for: most advertisers kill winners too fast.

As the person running the campaigns, you see your own ads constantly, both in the platform and often in your own social feed. After months of looking at the same creative, you get sick of it. Your instinct is to assume everyone else is sick of it too.

But check the frequency data. If the ad’s frequency is 2 in a 30-day window, your audience has barely seen it. You’ve seen it 500 times. They’ve seen it twice. Your fatigue is not their fatigue. Before pulling a creative winner, always check the audience-side frequency, not your own gut reaction.
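Frequency is the average number of times each reached person saw the ad, which works out to impressions divided by reach over the window. A small sketch of the check, where the function names and the `max_frequency` cutoff are our own illustrative assumptions rather than a platform setting:

```python
def audience_frequency(impressions, reach):
    """Average number of times each reached person saw the ad in the window."""
    if reach <= 0:
        raise ValueError("reach must be positive")
    return impressions / reach


def audience_is_fatigued(impressions, reach, max_frequency):
    """Compare audience-side frequency against a cutoff you choose (hypothetical)."""
    return audience_frequency(impressions, reach) > max_frequency


# 120,000 impressions across 60,000 people in 30 days: each person saw it twice.
print(audience_frequency(120_000, 60_000))  # 2.0
print(audience_is_fatigued(120_000, 60_000, max_frequency=4.0))  # False
```

The right cutoff varies by account and audience size, so treat `max_frequency` as something to calibrate against your own cost-per-conversion history.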

Where AI Belongs (and Doesn’t Belong) in Creative Production

AI now plays a role in every stage of what we call the creative assembly line at Flywheel. That assembly line starts with figuring out how many creatives to produce, moves through competitive research and idea generation, continues into brief writing, and ends with final design production.

AI has a legitimate place in every single one of those stages. It can accelerate research, generate concept options, draft initial briefs, and produce design mockups and variations at a pace that would have been impossible two years ago.

Where AI is the least helpful today is that final step: producing finished, production-ready ad creative. AI-generated images and videos are improving rapidly, and there are systems that can produce decent variants. But for brands that take their visual identity seriously, AI output still tends to feel generic. It’s much better at producing mockups and concepts than it is at producing the final asset you’d actually run.

That will change, probably faster than most people expect. But for now, the rule is straightforward.

The corollary to “AI everywhere in the process” is that nobody should ever feel like they’re getting an AI ad. Not your audience, not your designer, not your client, not your boss. When AI is used well, it’s filtered through human taste and judgment, built with enough context and constraints that the output doesn’t sound or look generic.

If something feels like AI slop (and yes, that term has its own Wikipedia page now), that’s the signal to intervene, even if AI was legitimately helpful at every prior stage of the process.

Frequently Asked Questions

How much budget do I need for creative testing?

There’s no fixed dollar amount. The right testing budget depends on your cost per conversion, how much proven creative you already have, and your profitability constraints. A useful starting range is 15-50% of your total ad spend. Work backward from your cost per conversion to determine how many creatives you can meaningfully test each month.

Should I still test audiences on Meta in 2026?

For most advertisers, manual audience testing has been largely replaced by Advantage+ and broad targeting. Instead of testing audiences through targeting settings, test them through creative: create ads that speak directly to specific audience segments and let Meta’s algorithm find the right people. This approach consistently outperforms manual audience segmentation for most account types.

How many creatives should I test per month?

This depends entirely on your budget and cost per conversion. At $50K/month with a $20 cost per conversion, you might test 20-30 creatives. At $50K/month with a $200 cost per conversion, you might only test 4-8 meaningfully. The key is having enough data points per creative to make statistically sound decisions.

What’s more important: the creative format or the message?

The message wins every time. Our testing hierarchy places value proposition, offer, and audience (through creative) at the base of the pyramid, to be tested before format, hook, and CTA. Nail the message first, then optimize how it’s delivered. The most beautifully produced video ad with the wrong value proposition will lose to a simple static image with the right one.

How do I know when a creative test has enough data?

A common rule of thumb is to wait for at least 50 conversions per creative variant before drawing conclusions, though lower thresholds can work for directional signals. If your cost per conversion is high, that may take weeks or require a larger budget allocation. The key metric is statistical confidence in the performance difference, not simply time elapsed.
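One common way to put a number on that confidence is a two-proportion z-test on conversion rates. The sketch below is a generic statistical check, not something Meta provides: it assumes conversion rate is measured per click, uses the normal approximation (reasonable only with adequate sample sizes), and the sample counts are invented for illustration.

```python
import math


def two_proportion_z_test(conv_a, clicks_a, conv_b, clicks_b):
    """Two-sided z-test for a difference in conversion rate between two creatives.

    Returns (z, p_value), using the pooled-proportion standard error.
    """
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal CDF: Phi(x) = 0.5 * (1 + erf(x / sqrt(2))).
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Both variants have 50+ conversions, but 60 vs 50 on 2,000 clicks each is not conclusive.
z_close, p_close = two_proportion_z_test(60, 2_000, 50, 2_000)
print(round(z_close, 2), round(p_close, 2))

# A wider gap (90 vs 50) on the same traffic is much harder to explain by chance.
z_wide, p_wide = two_proportion_z_test(90, 2_000, 50, 2_000)
print(round(z_wide, 2), p_wide < 0.01)
```

The first comparison shows why a conversion count alone isn't the finish line: both variants clear 50 conversions, yet the gap between them could easily be noise.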